The promise of generative AI in creative production has largely focused on a single metric: speed. We are told that assets which once took days can now be “hallucinated” into existence in seconds. For content teams under pressure to fill social calendars, refresh ad creatives, and populate web pages, this promise is seductive. However, as the initial novelty of text-to-image generation fades, a structural flaw is emerging in many enterprise workflows. Teams are optimizing for the speed of generation while sacrificing the control required for professional-grade output.

This “speed-first” trap creates a paradox where teams produce more content than ever, yet spend a disproportionate amount of time managing a rising tide of “near-miss” assets. When a workflow is built solely around the prompt, the creative process becomes a lottery. To fix this, teams must shift their perspective from viewing AI as a magic wand to viewing it as a high-precision instrument that requires manual oversight and surgical refinement.

The Fallacy of the “Perfect” Prompt

One of the most common mistakes in modern AI workflows is the over-reliance on prompt engineering as the primary lever for quality. There is a prevailing belief that if you just get the wording right—adding the correct descriptors for lighting, lens type, and style—the AI will eventually deliver a finished product.

In a production environment, this leads to “prompt fatigue.” A creator might spend forty minutes tweaking a prompt to remove a stray object or adjust the tilt of a subject’s head, only to find that each new iteration changes five other things they liked about the previous version. This follows from how diffusion models work: sampling starts from random noise, and the prompt conditions the whole image at once, so even with a fixed seed a small wording change can alter regions the creator never meant to touch.

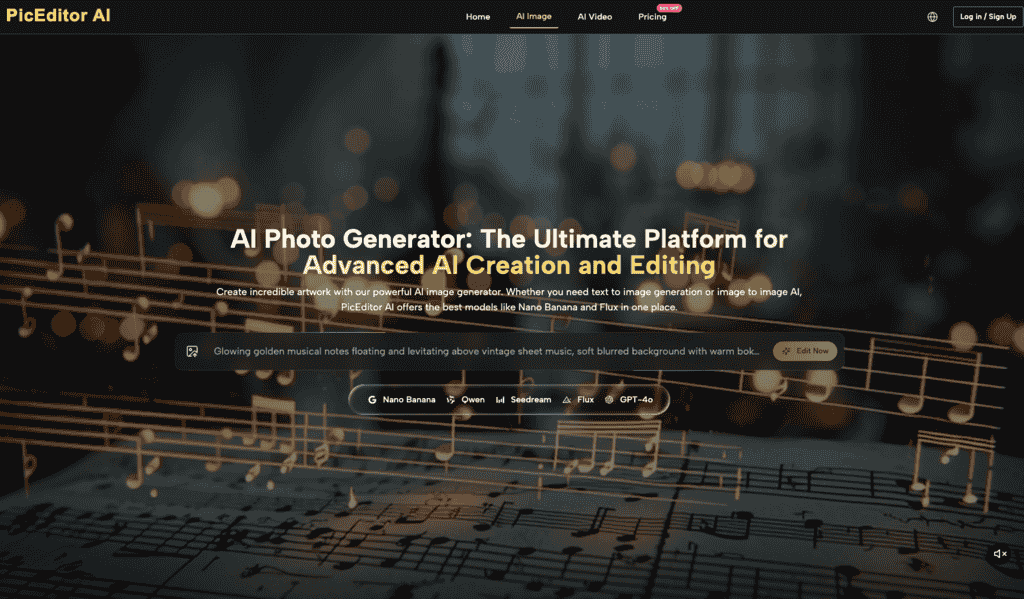

When speed is the only goal, teams often accept “good enough” images that carry subtle AI artifacts or off-brand elements simply because they don’t have the mechanism to fix them without rerolling the entire image. This is where a dedicated AI Image Editor becomes a necessity rather than an afterthought. By moving the focus from the prompt to the edit, teams can lock in the 90% of the image that works and manually direct the remaining 10% toward perfection.

The Hidden Cost of Visual Drift

When speed is prioritized over control, brand consistency is usually the first casualty. Generative AI is remarkably good at creating “vibes,” but it is notoriously poor at adhering to strict brand guidelines across different sessions. A team might generate a series of hero images for a campaign, only to realize upon review that the color temperature shifts slightly between assets or that the product’s proportions vary.

In a speed-first workflow, these discrepancies are often overlooked until the assets are live. This “visual drift” erodes brand equity. Professional design has always been about the intentionality of every pixel. If a team cannot control the specific placement of a shadow or the exact saturation of a brand-specific blue, they are no longer designing; they are merely curating.
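A rough version of this drift check can be automated before assets go live. The sketch below is a minimal illustration, not a production color-management tool. It assumes each asset has already been reduced to a list of (R, G, B) tuples sampled from the region where the brand color should appear. The function names, the brand color value, and the tolerance of 12 are all hypothetical.

```python
from math import sqrt

def mean_color(pixels):
    """Average (R, G, B) over a list of RGB tuples."""
    n = len(pixels)
    return tuple(sum(p[i] for p in pixels) / n for i in range(3))

def color_distance(a, b):
    """Euclidean distance in RGB space (a crude proxy for perceived difference)."""
    return sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def find_drift(assets, reference, tolerance=12.0):
    """Return the names of assets whose mean color is farther than `tolerance` from `reference`."""
    return [
        name
        for name, pixels in assets.items()
        if color_distance(mean_color(pixels), reference) > tolerance
    ]

# Hypothetical brand color and sampled pixels, for illustration only.
brand_blue = (0, 82, 204)
assets = {
    "hero_a": [(0, 82, 204), (2, 84, 202)],
    "hero_b": [(20, 100, 180), (22, 98, 182)],
}

flagged = find_drift(assets, brand_blue)
print(flagged)
```

Here `hero_b` would be flagged for review while `hero_a` passes. Plain RGB distance is a simplification; a team that needs perceptual accuracy would likely compare in a perceptual color space instead.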

The limitation here is not necessarily the AI’s capability, but the workflow’s lack of a “checkpoint” system. Without a way to anchor specific elements of an image while evolving others, the output remains a series of disconnected experiments rather than a cohesive visual language.

Control vs. Generation: Finding the Balance

The most effective creative teams are beginning to distinguish between “generation” and “manipulation.” Generation is the act of bringing something from nothing—the broad strokes of a concept. Manipulation is the act of refining that concept into a usable asset.

Most AI visual workflows fail because they attempt to use the generation tool for manipulation. They try to “prompt away” a mistake. This is inefficient. From an operational standpoint, it is much faster to use an AI Photo Editor to mask an area and regenerate a specific hand, or to use an eraser tool to remove a background artifact, than it is to hope the next prompt gets it right.
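The principle behind masked editing can be shown in a few lines. The sketch below is only an illustration of the "lock what works, change what does not" idea, assuming images are small grids of grayscale values and that a regenerated candidate already exists. It is not how any particular editor implements inpainting, where the masked region is typically generated with awareness of its surroundings. The `composite` function and the sample values are hypothetical.

```python
def composite(original, regenerated, mask):
    """Take regenerated pixels where mask is 1 and original pixels everywhere else."""
    return [
        [new if m else old for old, new, m in zip(o_row, r_row, m_row)]
        for o_row, r_row, m_row in zip(original, regenerated, mask)
    ]

original = [
    [10, 10, 10],
    [10, 99, 10],  # 99 stands in for the flawed region, e.g. a malformed hand
]
regenerated = [
    [55, 55, 55],
    [55, 12, 55],  # a full-frame candidate; only the masked pixel is wanted
]
mask = [
    [0, 0, 0],
    [0, 1, 0],     # 1 marks the area the editor is allowed to change
]

fixed = composite(original, regenerated, mask)
print(fixed)
```

Every pixel outside the mask is guaranteed to be unchanged, which is exactly the guarantee a re-prompt cannot give.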

We must acknowledge an uncomfortable truth: AI still struggles with spatial logic and fine detail. Whether it is the classic “six-fingered hand” or a bizarrely warped perspective on a table edge, these errors are common. A speed-obsessed team might ignore these, but a control-oriented team views them as the start of the work, not the end. The goal is to spend less time “rolling the dice” and more time in a functional AI Photo Editor environment where specific pixels can be governed.

The “Near-Miss” Inventory Problem

Another pitfall of speed-focused workflows is the accumulation of “near-miss” inventory. When generation is fast and cheap, teams tend to generate hundreds of variations for a single task. This creates a massive bottleneck in the review process.

Creative directors and stakeholders are suddenly buried under a mountain of options, most of which are 95% correct but 100% unusable for production. The result is a new kind of “technical debt” within the creative department: the time saved in the creation phase is swallowed whole by the time required to sort, tag, and reject sub-par assets.

A control-first workflow addresses this by emphasizing quality over quantity at the point of origin. Instead of generating fifty variations, the goal should be to generate three strong foundations and then use an AI Image Editor to refine the best one into its final form. This reduces decision fatigue and ensures that the assets reaching the review stage are actually ready for deployment.

The Limitations of Current Creative AI

It is important to reset expectations regarding what these tools can actually do without human intervention. While the marketing for many AI tools suggests a “one-click” future, the reality of production is more nuanced.

One primary limitation is the AI’s lack of “contextual awareness” regarding physics and brand-specific history. An AI does not know that your product cannot physically bend a certain way, or that your brand never uses a specific shade of orange. These are human-level judgments.

Furthermore, we must be cautious about the “genericism” that comes with high-speed AI output. Because these models are trained on vast datasets of existing imagery, their “first guess” is often the most statistically average one. Without a workflow that allows for manual intervention and stylistic steering, a team’s visual output will eventually start to look like everyone else’s.

Operationalizing Control: A New Workflow Blueprint

To move away from the speed-first trap, teams should restructure their visual pipelines around four distinct stages:

- Foundational Generation: Use broad prompts to establish composition, lighting, and subject matter. Do not worry about perfection at this stage; focus on the “bones” of the image.

- Surgical Correction: Use an AI Image Editor to fix specific technical errors. This includes inpainting for anatomical fixes, removing unwanted objects, and adjusting specific textures.

- Global Harmonization: Apply consistent brand filters, color grading, and upscale parameters to ensure the asset matches the broader campaign.

- Human Finalization: A final pass by a designer to ensure that the “soul” of the image is intact and that no obvious AI-isms remain.
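One way to make these four stages enforceable rather than aspirational is to track them per asset. The sketch below is a hypothetical illustration, assuming a team records stage completion in its own tooling. The `Asset` class, the stage identifiers, and the `ready_for_review` property are invented for this example and do not correspond to any specific product.

```python
from dataclasses import dataclass, field

# Stage identifiers mirror the four stages listed above (names are illustrative).
STAGES = (
    "foundational_generation",
    "surgical_correction",
    "global_harmonization",
    "human_finalization",
)

@dataclass
class Asset:
    name: str
    completed: list = field(default_factory=list)

    def advance(self, stage: str) -> None:
        """Record a finished stage, refusing to skip or repeat a step."""
        done = len(self.completed)
        expected = STAGES[done] if done < len(STAGES) else None
        if stage != expected:
            raise ValueError(f"expected {expected!r}, got {stage!r}")
        self.completed.append(stage)

    @property
    def ready_for_review(self) -> bool:
        """True only once all four stages have been completed in order."""
        return tuple(self.completed) == STAGES

hero = Asset("hero_banner")
for stage in STAGES:
    hero.advance(stage)
print(hero.ready_for_review)
```

An asset that tries to jump from generation straight to human finalization raises an error, so unrefined near-misses never reach the review queue.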

This approach may take 20% longer than a “prompt and pray” workflow, but it reduces rework by nearly 80%. It shifts the labor from the frustrating task of trying to communicate with a latent space to the productive task of using an AI Photo Editor to achieve a specific vision.
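To see how that trade-off plays out, the percentages above can be applied to a concrete case. The sketch below takes the 20% and 80% figures at face value and uses made-up baseline times (60 minutes of production and 100 minutes of rework per asset) purely for illustration. The function name and parameters are hypothetical.

```python
def total_minutes(production, rework, overhead_pct=0, rework_reduction_pct=0):
    """Total time per asset after applying a production overhead and a rework reduction."""
    adjusted_production = production * (100 + overhead_pct) / 100
    adjusted_rework = rework * (100 - rework_reduction_pct) / 100
    return adjusted_production + adjusted_rework

# Hypothetical baseline: 60 minutes to produce an asset, 100 minutes of rework.
speed_first = total_minutes(60, 100)
control_first = total_minutes(60, 100, overhead_pct=20, rework_reduction_pct=80)

print(speed_first, control_first)
```

Under these assumed numbers the controlled workflow takes 92 minutes against 160. More generally, with these percentages the controlled workflow comes out ahead whenever baseline rework exceeds a quarter of the baseline production time.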

Conclusion: Precision as the Ultimate Speed

In the world of creative production, true speed is not measured by how fast you can hit “generate.” It is measured by how quickly you can move an idea from a concept to a finished, approved, and published asset.

Teams that obsess over the speed of the initial generation are often the slowest to actually cross the finish line because they are hindered by quality issues, brand drift, and endless cycles of re-prompting. By integrating tools that offer granular control, such as a robust AI Photo Editor or a specialized AI Image Editor, teams can reclaim their creative agency.

The future of AI-driven design is not about replacing the editor; it is about empowering the editor to work with surgical precision. When you stop chasing the miracle prompt and start focusing on the refined edit, you don’t just work faster—you work better. Speed is a byproduct of a controlled workflow, not its primary objective.